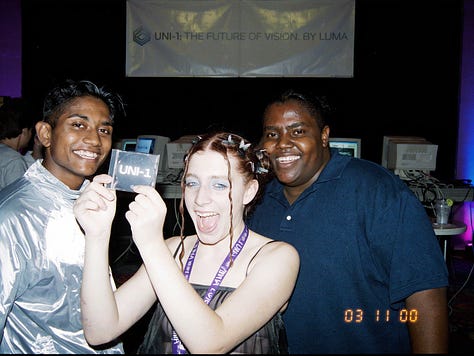

Luma Uni-1 is now available via Partner Nodes

An autoregressive image model that reasons before it draws — unified text-to-image and editing, with state-of-the-art results on visual reasoning benchmarks.

Luma’s Uni-1 is now available in ComfyUI via Partner Nodes. Unlike most image models, Uni-1 isn’t a diffusion model — it’s a decoder-only autoregressive transformer that treats text and images as a single interleaved sequence, jointly modeling time, space, and logic in one architecture. The result: a model that reasons about your prompt before generating — decomposing instructions, resolving constraints, and planning composition like a frontier LLM.

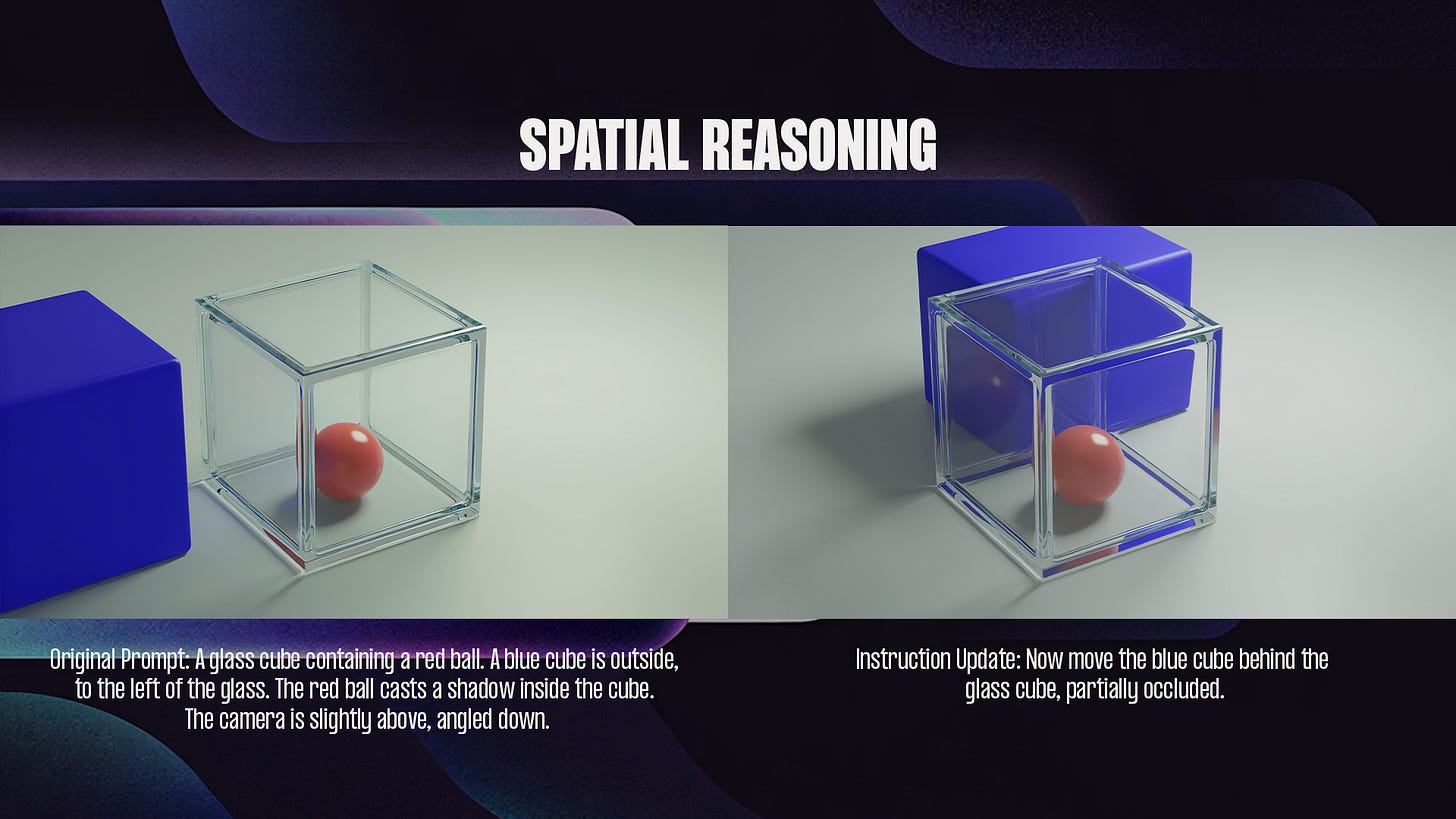

Built around reasoning, not just generation

Most image models go straight from prompt to pixels with no explicit planning stage. Uni-1 works differently: it thinks first, then draws.

Because Uni-1 is autoregressive and treats text and images as the same kind of sequence, it can reason through your prompt before generating anything. It breaks the instruction into parts, works out the tricky bits (how many of something, what goes where, any logical conditions), and plans the composition — all before committing to pixels.

Features

Photorealism with material accuracy

Text rendering that’s actually readable

Reference-guided generation with identity preservation

Image editing and multi-turn refinement

Multi-panel output with temporal consistency

Web search grounding

Multilingual prompts

Output flexibility

Uni-1 supports 9 aspect ratios, from 3:1 ultra-wide panoramic banners to 1:3 ultra-tall portrait formats, plus seven ratios in between, including 16:9, 1:1, 9:16, and 2:3.
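When you're editing an existing image, it can help to pick whichever supported ratio sits closest to your source's shape. Below is a minimal sketch of that idea. It assumes you only care about the six ratios named above (the post doesn't list all nine), and `closest_ratio` is a hypothetical helper, not part of ComfyUI or the Luma node. How the node itself exposes its aspect-ratio setting isn't covered here.

```python
import math

# Only the ratios explicitly named in this post. Uni-1 supports 9 in total,
# so this table is deliberately incomplete (an assumption of this sketch).
KNOWN_RATIOS = {
    "3:1": 3 / 1,
    "16:9": 16 / 9,
    "1:1": 1.0,
    "2:3": 2 / 3,
    "9:16": 9 / 16,
    "1:3": 1 / 3,
}


def closest_ratio(width: int, height: int) -> str:
    """Hypothetical helper: return the listed ratio nearest to width/height.

    Distances are measured on a log scale, so 2:1 and 1:2 count as equally
    far from 1:1, which treats wide and tall images symmetrically.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    target = math.log(width / height)
    return min(KNOWN_RATIOS, key=lambda name: abs(math.log(KNOWN_RATIOS[name]) - target))


print(closest_ratio(1920, 1080))  # 16:9
print(closest_ratio(800, 1200))   # 2:3
print(closest_ratio(3000, 1000))  # 3:1
```

Comparing log ratios rather than raw ratios keeps the choice symmetric between landscape and portrait sources.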

Getting Started

Update ComfyUI to the latest version, or access Comfy Cloud.

Find the Luma UNI-1 Image node via the Node Library, or load a template from the Templates panel.

Drop in your prompt (and a source image if you’re editing), connect outputs, and run.

Uni-1 joins the growing roster of image models available through ComfyUI Partner Nodes. Try it out and let us know what you build.

Don't forget to look under the 'model' drop-down menu for the 'Uni-1-max' model.
